Words: 861

Time to read: ~ 5 minutes

Welcome to T-SQL Tuesday, the monthly blogging party where we talk about a topic given to us by the host. This month, we have Steve Jones (blog | twitter) asking us about Dynamic SQL.

There are a myriad number of uses for Dynamic SQL – I’ve already read some of the published posts and I’m impressed with the range. (Yeah, I’m writing this late; I’m aware).

I’m aiming for something different. I want to talk about the things I believe Dynamic SQL should have. You can disagree with me if you’d like; I’d welcome it (as long as you can justify it) but here are my thoughts on writing Dynamic SQL.

DECLARE

To make a contrived example, I’ve taken Andy Mallons (blog | twitter) script to return SQL Agent Job statuses, and converted it to Dynamic SQL

I was going to apologise for how long and messy it looked but I realised that’s par for the course with Dynamic SQL.

SET

There is this maxim that I’ve heard bandied about regarding code:

Code is read much more often than it is written[…]

https://devblogs.microsoft.com/oldnewthing/20070406-00/?p=27343

There should be an addendum on that quote for DBAs:

DBAs troubleshoot Dymanic SQL more often than they write it

Probably others, but definitely me

Saying that, Dynamic SQL should have these items to help with that effort.

Proper Formatting

It’s extremely easy to write Dynamic SQL so that it comes out in one string. A hodge-podge of plus signs, variable assignments, and red text that sometimes it seems like a foreign coding language

>++++++++[<+++++++++>-]<.>++++[<+++++++>-]<+.+++++++..+++.>>++++++[<+++++++>-]<+

+.------------.>++++++[<+++++++++>-]<+.<.+++.------.--------.>>>++++[<++++++++>–

]<+.

The above code sample is apparently a working “Hello World” program in one of those languages.

Don’t do this, properly format your Dynamic SQL. It will help when the code shows up in your monitoring toolkits. You have them (and know how to use them), right?

To help check your formatting, Dynamic SQL should include…

A Debug Method

Troubleshooting is so much easier when you know what you are going to run. The amount of Dynamic SQL where you have to build that in your head while reading the code is ridiculous!

If you give a procedure filled with Dynamic SQL to a junior DBA, then you’re going to see how fast fear and resignation fills someones face.

It’s straightforward to create a debug method that shoots out the code that is going to be run. Secondary benefit is it ensures that you format your code properly because you can see how it is going to turn out

IF @debug_mode_on = 1

BEGIN

RAISERROR(N'%s%s', 0, 1, @job_sql, @nl) WITH NOWAIT;

END;

ELSE

BEGIN

EXECUTE [master].[sys].sp_executesql

@stmt = @job_sql,

@param1 = N'@ds_job_name AS nvarchar(128)',

@ds_job_name = @actual_job_name;

END;

A.O.B

There are a few other things that I like to add to Dynamic SQL but I will grant are not necessary. I’ll leave them to you to make up your own minds about.

Sanitise inputs

If the user passes in an object, ensure it’s there

/* Job existence check */

IF @job_name IS NOT NULL

BEGIN

SET @actual_job_name = (

SELECT

[name]

FROM msdb.dbo.sysjobs

WHERE

[name] = @job_name

);

IF @actual_job_name IS NULL

BEGIN

DECLARE @err_msg AS nvarchar(max);

SET @err_msg = FORMATMESSAGE(N'Cannot find any job labelled: %s', @job_name);

RAISERROR(N'%s', 0, 1, @err_msg) WITH NOWAIT;

RETURN

END;

END;

Escaping input

Same point really – if you want to raise a warning or error with what is passed in, use something that escapes user input.

I tend to use FORMATMESSAGE for these bits.

EXECUTE

These might appear to be overkill but I have an cough contrived cough example; the code I took from Andy’s github and converted to Dynamic SQL!

Quick Test with Debug

If we pass in no job name and leave debug mode on; it splits out the code sans any WHERE clause before the ORDER BY to bring us back all jobs:

If we change up the job name to one that does not exist e.g. @job_name = N'No existy'; then we verify that the job doesn’t exist and error out:

Passing in a job that does exist, then adds that job name to the WHERE clause (parameterised, of course):

Let’s Run It!

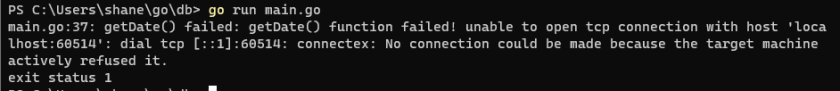

Turn off debug mode and…

That’s throwing up an error message – but I’d be confident in saying that anyone glancing over the code in the gist would miss the three mistakes.

Yes, three of them – I wrote one on purpose but created two more by accident.

Luckily we can turn debug more back on, grab the output, and parse it in a new window:

The AND on the JOIN clause was intentional – the terminating semi-colon before the WHERE clause was not, neither was the mistake on the alias. The joys of re-writing code.

Fix these mistakes up in our Dynamic SQL, turn debug mode back off, and ready to re-run? Probably took around 5 minutes going slow, but now when we re-run the code:

Result Set

That’s one example of why I have believe Dynamic SQL should have proper formatting, and debugging, and some small others.

I had more examples but they normally come to me around 02:00 in the morning while I’m on swearing and sweating on an incident call.

I’d prefer to fix and forget them, after I make sure they don’t happen again.